Defensibility in the Age of AI (2026)

What happens when the big AI model providers keep pushing deeper into the application layer? How defensible are vertical AI solutions?

Leonard Wossnig

Co-Founder and CTO

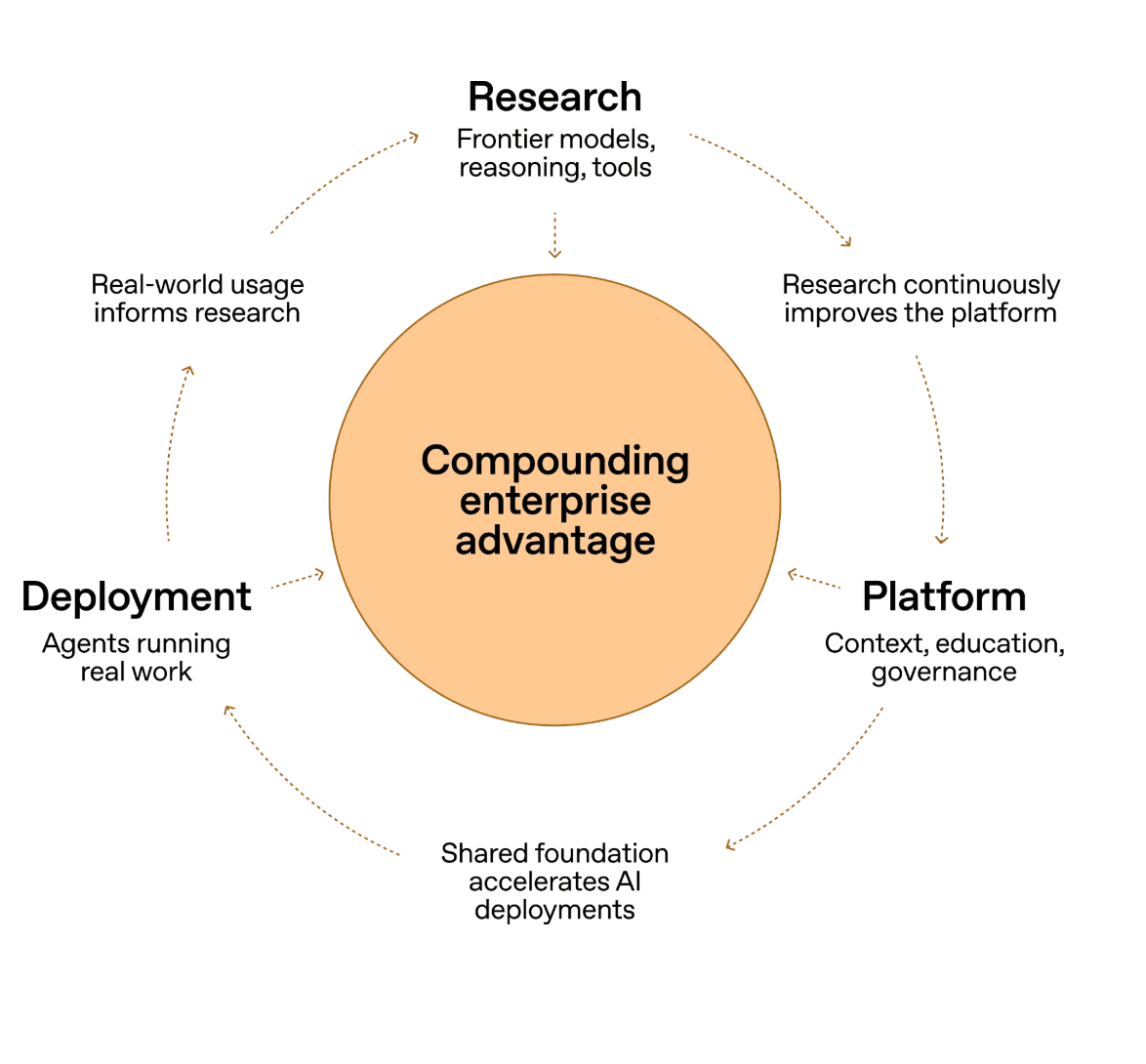

OpenAI Frontier is aiming to become the AI team member for the Enterprise. It is OpenAI’s reaction to Claude Co-Work, which was recently celebrated across the media as a true automation tool for business users.

I recently spoke to a friend, Bowen, a founder who was previously an investor at the US VC firm a16z. He asked me what our company’s moats are. The day after, Christie, another founder I met over coffee, asked the same question in a different guise: “how do you think about defensibility?”

These conversations are not isolated. The topic keeps surfacing in the startup and VC world because of the fast advances we are seeing with AI coding agents and the general capability gains from the large model providers. From simple coding tasks to very complex knowledge work, we have watched the foundation model providers move progressively closer to the application layer, first through coding tools and more recently through knowledge work such as finance, legal, and accounting, with products like Claude Cowork. OpenAI’s release of Frontier today is the latest move in the same direction, and it makes the question of defensibility feel more pressing than ever.

OpenAI Frontier positions itself as a complete platform for the enterprise needs.

The numbers make the stakes concrete. Anthropic’s release of the Claude Cowork legal plugin on 2 February 2026 wiped roughly $285 billion in market value from software and legal technology companies in a single session, with Thomson Reuters falling 16%, RELX 14%, and Wolters Kluwer 13%. Shortly after, Anthropic raised $30 billion at a $380 billion valuation, which makes it clear that the company has the capital to pursue vertical market entry at scale. Against that backdrop, the defensibility question is no longer academic. It is particularly critical for AI application startups built on top of foundation model capabilities, where both the rapid change of the underlying technology and the rapid advance of the competition from the model providers themselves mean startups have to go faster than ever.

In the following, I want to lay out how I think about moats for B2B AI applications in 2026: what is being eaten, what is not, and what we founders can do about it. Note: Even though AI changes some things, a lot of the old fundamentals still remain true.

What the foundation model layer is eating

Model providers are progressively taking more of the application layer. This is no longer up for debate. They have absorbed a large chunk of software engineering already, and more recently the lower end of legal, auditing, and other knowledge work.

This is easy for them to do because there is a lot of low-hanging fruit: knowledge work that is common, repeatable, and of limited complexity. Writing a standard NDA falls squarely into this bracket. Case law, where content has been collected, curated, and editorially validated over decades, does not.

Three further dynamics accelerate this. The first is Skills. Anthropic has designed its product to be easily extensible in natural language, and Skills let any user define and refine a process through simple, repeatable instructions that can be shared across an organisation. Where the stakes are not too high, a responsible person can write a Skill and share it with the team, and the general-purpose tool is adapted for the organisation without any integration work. It is not much more complex than mentoring a junior employee, and thanks to the iterative feedback loop in the chat interface, anyone can refine it until it works as they want. In a way, this is real-world reinforcement learning.

What makes Skills significant is what they displace. In the past, this kind of adaptation required forward-deployed engineers or professional services budgets, which is still how most legacy enterprise software gets adapted today. Now anyone in the organisation can do it. That shift is arguably one of the biggest differentiators between AI-native platforms and the legacy software stack they are moving to replace.

The second is the combination of agents and connectors. Agents can reason over unstructured data directly, which removes a class of work that vertical SaaS has historically had to do on behalf of the customer: data modelling, cleaning, schema design, ETL, and the general plumbing of getting information into a usable shape. At the same time, the model providers already ship native connectors to the places enterprise data actually lives. Claude Cowork connects to Google Drive, Gmail, and DocuSign out of the box, and similar connectors exist for SharePoint and the rest of the Microsoft 365 stack. The combination means that a general-purpose agent can sit on top of a customer’s existing document estate and do useful work, without the customer having to export data, map it into a new schema, or stand up a dedicated integration. The implicit data-access moat that a lot of vertical tools quietly relied on starts to look thinner in that light.

The third is the enterprise distribution push. In March 2026, Anthropic committed $100 million to a Claude Partner Network that brought Accenture, Deloitte, Cognizant, and Infosys into its enterprise ecosystem. This is a new and underdiscussed threat vector. For any AI application startup selling into consulting firms, global SIs, or large enterprises, the foundation model provider can now reach your customers through the same partners that previously sold your product. It is distribution cut directly into the largest buyers of vertical AI software, and together with Skills and the agent-plus-connector stack it compresses what used to be three distinct application-layer advantages: adaptation, data access, and distribution.

There is, of course, a limit to what can be absorbed. Complexity of workflow is currently one boundary. Many human knowledge workflows cannot yet be translated into a simple Skill, partly due to model limitations, partly due to how models are integrated and used by companies and users, and partly due to the information and actions the models can access. I expect this boundary to shift over time, especially for verifiable work (where success can be measured against a clear outcome), and the generation of Skills will likely become more automated for such tasks. For non-verifiable work, agents and Skills are less likely to fully automate the task, and that perhaps constitutes a small moat.

What the foundation model layer is not eating

A recent Fortune piece discussed the model provider advances in the context of legal AI. It is worth flagging upfront that the piece is authored by a Thomson Reuters executive, so the framing is directionally self-serving, but the underlying argument is still useful. The article argues that Claude Cowork, Harvey, Legora, and Libra actually operate in the same category of legal AI: the “non-authoritative layer”, which covers tasks like standardising formatting, reviewing internal documents, and managing operational workflows (what the article calls operational legal tasks). Foundation models handle this layer well.

Where the legal AI companies still maintain an edge is through proprietary data infrastructure (Westlaw, LexisNexis, Wolters Kluwer) that has been curated, validated, and editorially maintained over decades. Foundation models do not replace that infrastructure. A complementary angle from the HAQQ blog makes a similar point from outside the incumbent camp: Claude’s plugin did not kill legal tech, it exposed which layers of the stack had no defence.

This brings us to the first moat that will still remain true: applications built on genuinely unique or hard-to-acquire data will remain defensible, with one caveat I return to below.

A part of unique data is also what people often talked about as data flywheels. I would call this out as distinct from network effects (discussed later), although the two are often conflated. The data that users generate on a platform (past proposals, won bids, qualification signals, feedback on tender fit, or even user preferences and rules) compounds into better outcomes for those same users on the platform, which creates a switching cost rooted in outcomes rather than habit. This is a data moat, and for vertical AI applications today it is one of the more durable ones as long as the data is owned by the company (not the user) and directly improves the outcome for the user.

A caveat on the proprietary data thesis: the data moat is weakening, not strengthening. This is not a new observation. a16z argued in 2024 that data is rarely a strong enough moat on its own for enterprise startups, and Sequoia made a similar point when they wrote that data moats are on shaky ground and that workflows and user networks are the more durable sources of competitive advantage.

Three forces are eroding the advantage of curated corpora faster than most people acknowledge: synthetic data, verifiable-reward RL, and direct licensing deals. Some proprietary datasets will turn out to be reconstructable at sufficient scale, and model providers are increasingly willing to license what they lack. What looks like a moat today may turn out to have been only a lead time in three years.

The corollary is simple: data alone is not enough.

Beyond data, I see three further moats that are commonly talked about and might remain in the world of AI:

Reference sales. Being first into a market and landing credible logos creates a trust gradient that competitors have to climb. In B2B, new buyers disproportionately follow what their peers have done, and reference customers compress sales cycles. This is real, but it is a delay rather than a durable moat. The moment a well-funded competitor lands a bigger logo in the same segment, the reference advantage compresses. Speed to market matters, and being first reinforces this moat.

A friend of mine, Joachim, an engineering manager at Legora, sharpened this for me over coffee recently. His observation was that the users you win through reference sales rarely stay neutral. They build workflows on top of your product, and that anchoring cuts both ways: competitors find it harder to rip you out, but you also find it harder to outrun them, because your own users are tied to what you have already shipped. This is especially pronounced when your customers are large, slow legacy organisations whose processes are built around your existing solution. Being first becomes a double-edged sword, and the stickier your reference customers are, the sharper the edge.

That stickiness is where reference sales start to blur into the next moat.

Network effects. These are the stickier ones, and in the long term I think they are the most durable of the non-data moats. In public procurement specifically, the interesting network effects are the sub-contractor networks and bidder consortia that form around winning teams. Once a prime contractor, specialist sub-contractor, and consortium partner have worked together on a platform, the cost of moving that entire group to a competitor is meaningfully higher than the cost of moving any single company. These are real two-sided effects and they take years to build. More broadly, moving whole companies to a different platform (even one that is cheaper or marginally better) remains hard at scale. I expect this to hold for some time — at least as long as humans, and not agents, are still making the buying decisions.

Another moat that might remain is taste and good product judgement. This is the last one I want to call out explicitly, and it connects to what I wrote in my previous article on AI agents in software engineering. As implementation gets cheaper, the bottleneck shifts to product discovery and judgement: knowing what to build, for whom, and how to sequence it. Harvey’s Gabe Pereyra has argued the same shift is now playing out in legal, where execution throughput has stopped being the constraint and organisations have to reorganise around review, coordination, and judgement instead. This is hard to automate because it depends on closeness to the problem, iteration with real users, and the accumulation of opinions about what good looks like in a specific domain. I believe this is the most underrated moat for application-layer companies in 2026, precisely because it cannot be replicated by a Skill, a plugin, or a better model. The companies that compound taste will out-build the ones that compound features.

There is an obvious objection here. Figuring out what to build is now the most important and most expensive work, but once you have figured it out, a competitor can simply copy the answer. This is true of any individual decision. If you ship a feature that lands, a fast follower will replicate it within months without paying the discovery cost you paid to get there. What they cannot copy as easily is the ongoing capability to make the next decision well, which sits in the institutional knowledge of a team that has been close to the problem for years. Followers copy answers. The judgement that produces the answers is what compounds.

Everything else — speed, integration depth, and feature parity — is a short-term advantage at best. Agentic coding workflows are collapsing the cost of building integrations and catching up on features, and a two-year lead measured in shipped functionality is no longer what it was in 2022. Any moat that relies on “we have more of X than them” should be assumed to compress. What compounds is the combination of proprietary data with workflows, relationships, network effects, and taste.

The harder question

There is a sharper version of the defensibility question that I think this kind of analysis tends to dodge, and it is worth taking on directly. The real threat is probably not the foundation model provider. They are large, well-capitalised, and their moves are visible in advance. The Partner Network, Skills, and the Cowork verticals are all predictable threats, and predictable threats are the easier kind to plan around.

The harder threat is the next application-layer startup built two years after yours, on cheaper inference, better tooling, and a five-person agentic engineering team that ships as fast as your team of eighteen. The barriers to building a well-integrated vertical application are collapsing for everyone, which includes the competitor that does not yet exist. In that framing, “we are two years ahead on features” or “we have the deepest integrations” are not moats, they are the exact advantages the next entrant will replicate more quickly than the last entrant could.

This sharpens rather than contradicts the earlier argument. It is precisely why proprietary data that compounds, network effects that take years to build, and taste that is accumulated rather than copied are the categories worth focusing on. Those are the moats a newcomer cannot work their way around with better model capabilities, cheaper inference, or even copying your product. Features, speed, and integration count are all advantages they can replicate.

What founders should do

The question of buy vs build will resurface as AI adoption gets easier. “Just write a Skill and share it with the team” is a powerful proposition, but writing and maintaining Skills takes time, and proper evaluation is still hard (you need the infrastructure, the data, and the ability to run proper tests). Maintenance costs of shared Skills in large organisations will compound on top. For many businesses it will not be worth doing in-house because writing Skills is not their core business. So a marketplace for Skills will emerge, along with specialised software that either offers these Skills and workflows directly, or wraps them into deeper vertical products.

This will be particularly true in more niche markets, including local European legal work, public procurement, and other verticals where the model providers will focus on the US market and the highest-volume use cases first. Not everything will immediately be eaten by the big providers.

If you are building an AI application company in 2026, focus on building a great product and stick to the old fundamentals: a durable combination of proprietary data, network or flywheel effects, and a team with genuine taste for the problem. Everything else compresses.

Insights

Concept-Based Tenders in Architecture: TED Market Data and Strategies for VgV Procedures 2026

How to win a concept-based tender in architecture: 2025 TED data on VgV procedures, contract values, and success factors at a glance.

Felicitas von Rauch

Insights

Evaluation Matrix in the Tender: Systematically Decoding Award Criteria

How Bid Managers strategically read a tender evaluation matrix. Practical guide to award criteria, weighting, and concept structure.

Felicitas von Rauch

Insights

What is a Bid Manager? Tasks, Tools, and Career Paths in Daily Procurement

Which Bid Manager tasks shape daily procurement: Requirements profile, salary, career paths, and tools for public tenders in detail.

Felicitas von Rauch